Dendory Network

Blog posts and papers about gaming, technology and art.

Greetings, my name is Patrick Lambert and this is my blog. I have over 20 years of experience in technology as a Systems Administrator and Solutions Architect, and I sometimes write blog posts on this site. My hobbies include anime, figure collecting and video gaming.

These posts are my own thoughts and not a representation of my employer or any other group.

| Post summary | Preview | |
|---|---|---|
| What is fate? How your success comes from equal part luck, a good social environment, and hard work. Created on: 2026-01-01 | | |
| Self-hosted Nexus deployment: How to locally host your own Docker images and Python modules. Created on: 2025-12-24 | | |

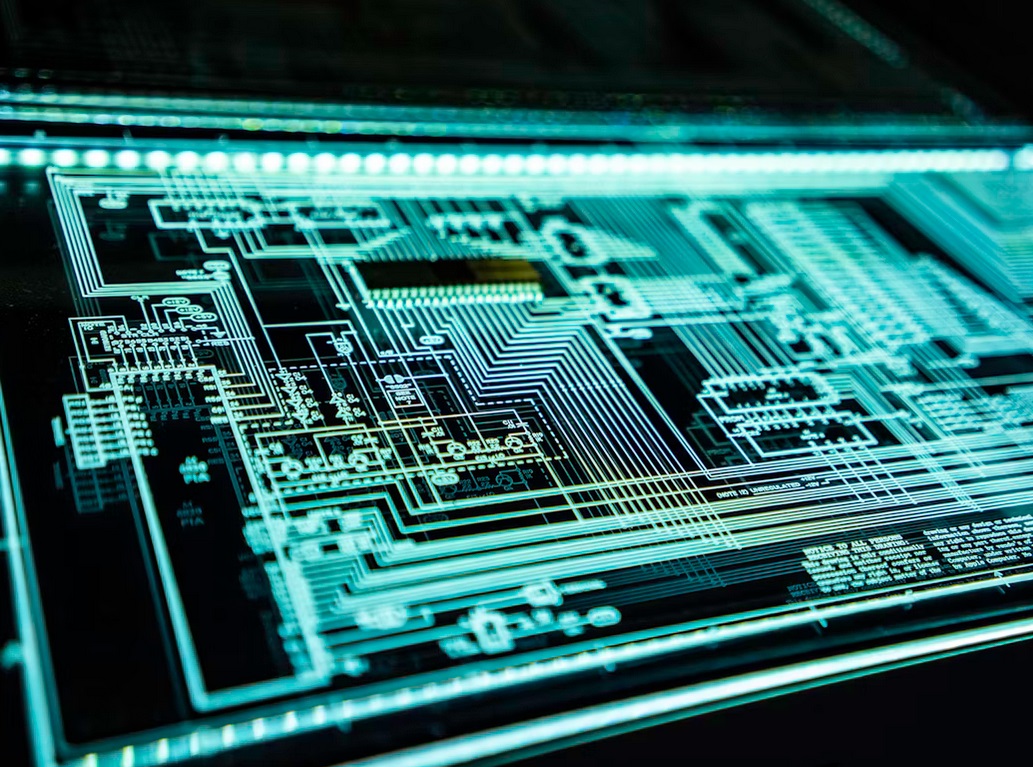

| Embracing IT alternatives: Are system administrators becoming mere users of technology? Created on: 2025-11-24 | | |

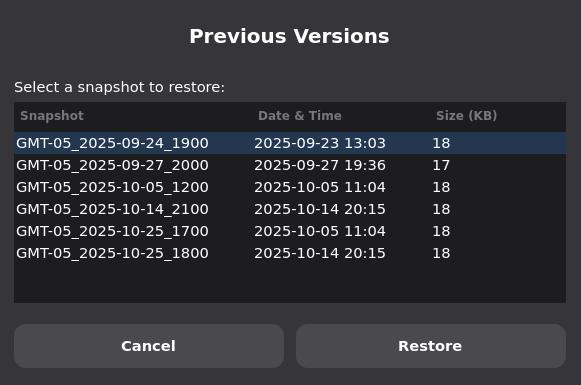

| Previous versions on Linux: Implementing volume shadow copy functionality on the Linux desktop. Created on: 2025-10-25 | | |
| A world of extremes: A look at our polarized society, from politics to online privacy and AI. Created on: 2025-10-08 | | |
| What is your threat model? Proper threat modeling is crucial to having a realistic IT infrastructure. Created on: 2025-09-23 | | |
| The Sovereign Cloud: How could Canada realistically create a sovereign cloud? Created on: 2025-09-14 | | |
| Why I became a digital archivist in 2025: Some thoughts about the current state of things and what led me to create DataHoarding.org. Created on: 2025-07-14 | | |

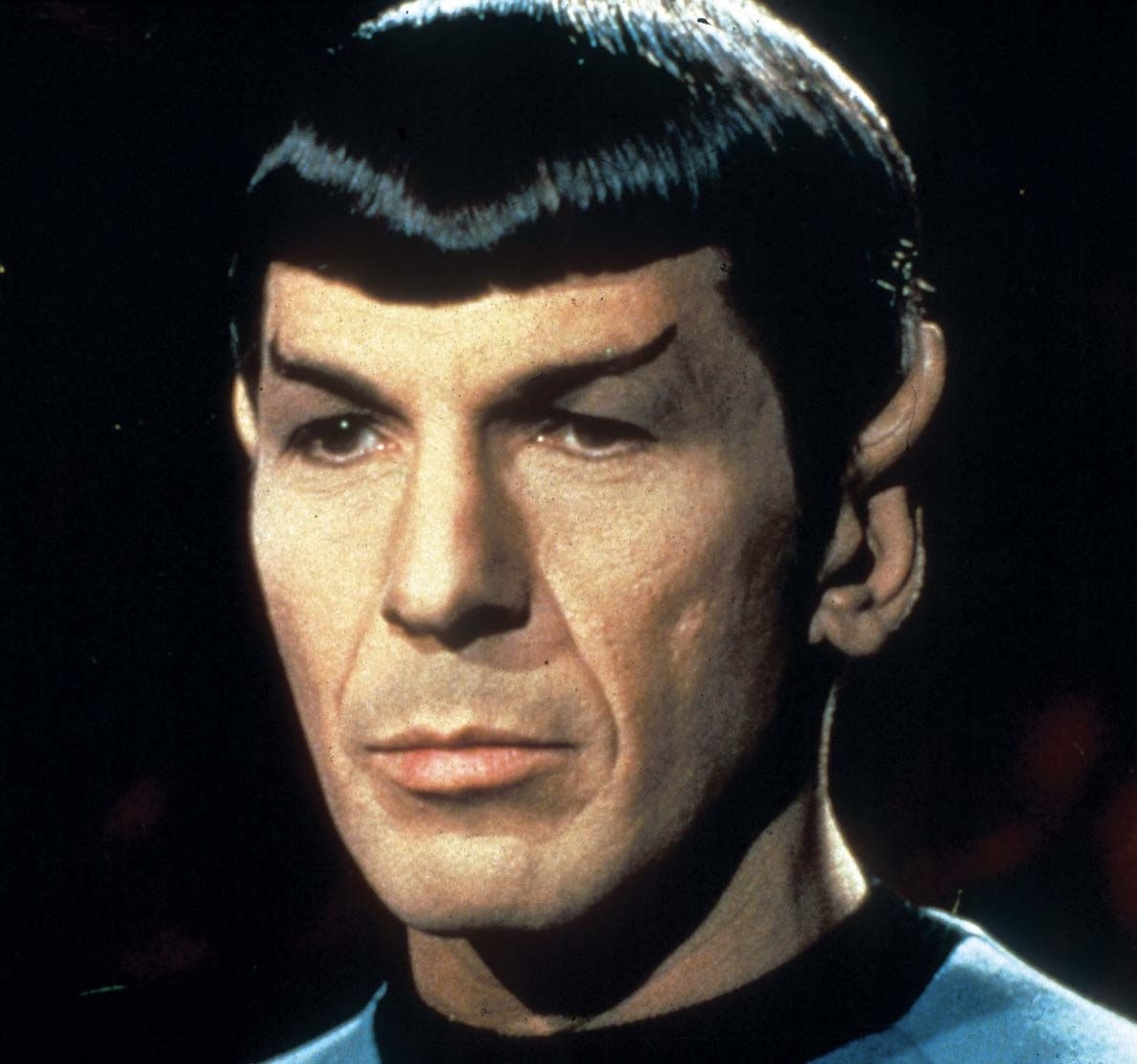

| Spock AI: Generating an audio and a video talking head using AI models. Created on: 2025-06-02 | | |
| Air flow experiment: Will AI models sacrifice people for the greater good? Created on: 2025-05-27 | | |
| A homelab primer: Designing, maintaining and scaling up a Proxmox cluster. Created on: 2025-04-20 | | |
| Can AI be conscious? Some thoughts about intelligence, our brain and perception. Created on: 2025-03-26 | | |
| AI Use Cases: From training to inference, understanding how to deploy AI models. Created on: 2025-03-15 | | |
| AI Art Generation: Analyzing stylistic consistency across AI models using ComfyUI. Created on: 2025-02-07 | | |
| Technological Sovereignty: A Canadian Perspective on Decoupling from U.S. Tech Giants. Created on: 2025-01-28 | | |

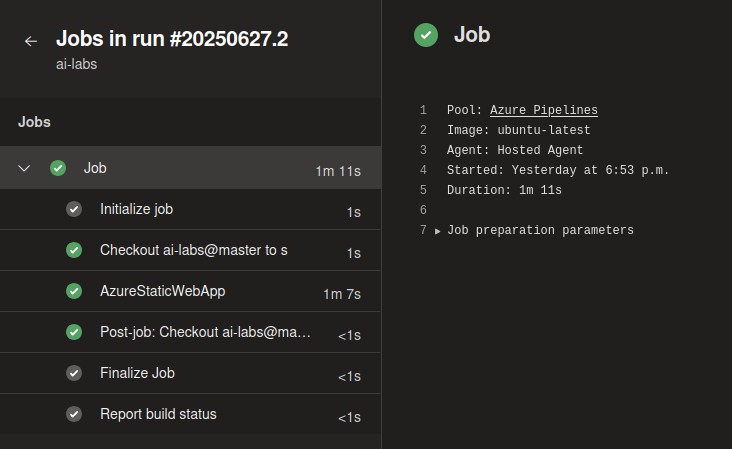

| Deploying a static website: Using Cloudflare CDN, Azure DevOps and Static Web Site for free. Created on: 2024-12-30 | | |
| Forwarding Proxmox email notifications: Keeping track of your systems using a service called smtp2go. Created on: 2024-12-11 | | |
| Building your MP3 music collection: A free way to build a self-hosted music collection using a Flask app, shortcuts and yt-dlp. Created on: 2024-05-01 | | |
| Montreal History Project: A look at one of Montreal's most iconic neighborhoods: Griffintown. Created on: 2024-02-14 | | |
| Deploying your Certificate Authority: Get rid of those self-signed certificates by creating your own chain of trust. Created on: 2024-01-03 | | |
| Nginx reverse proxy: Deploying a proxy to convert HTTP to HTTPS connections on a range of ports. Created on: 2023-12-22 | | |
| Deploying containers with Portainer: Configuring, running and managing Docker containers using Portainer on Debian. Created on: 2023-12-01 | | |
| Building a simple Flask app: Tutorial on building a web app to show some data using the Pandas library. Created on: 2023-09-28 | | |
| Using Hashicorp Vault: Safely storing secrets for Ansible, shell scripts and more. Created on: 2023-03-19 | | |
| Basic Syslog configuration: Monitoring multiple Linux hosts using a centralized Syslog server. Created on: 2021-01-15 | | |

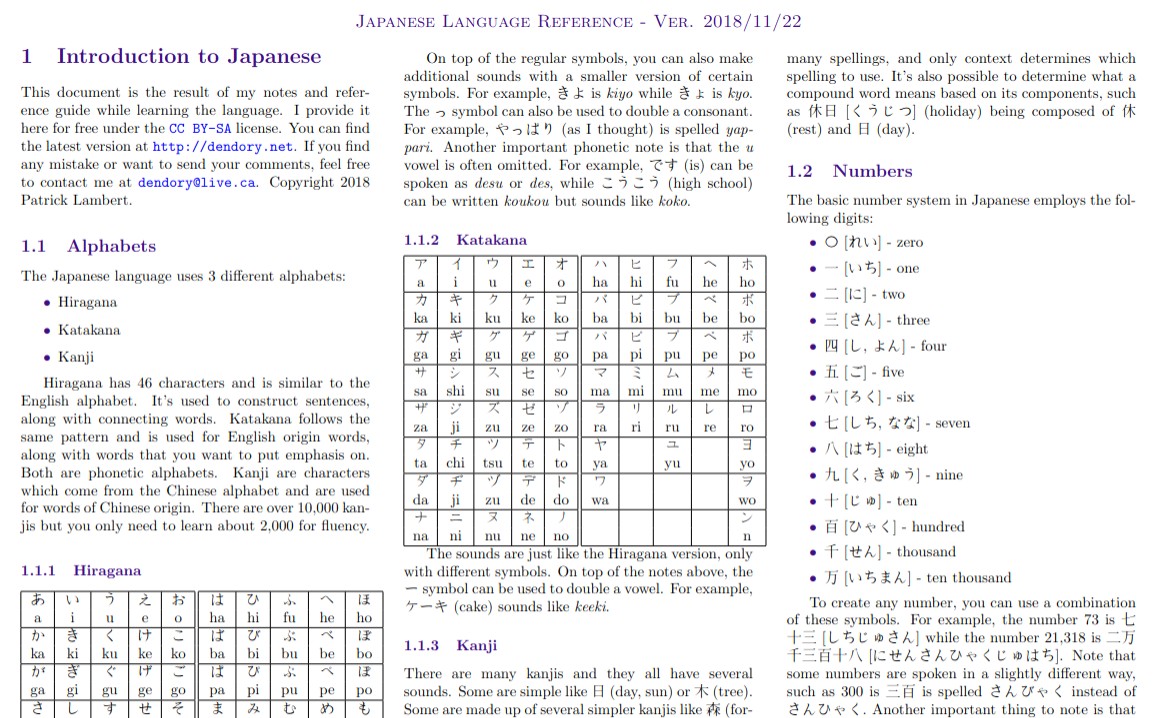

| JP cheatsheet: Study sheets for Japanese language learners. Created on: 2018-11-22 | | |
| Custom Apache header: How to hide the 'Server' header on Apache HTTP 2.4 server. Created on: 2018-01-23 | | |
| Using SQLite with PowerShell: A simple guide for accessing SQLite databases in PowerShell. Created on: 2017-03-02 | | |
| 10 Tenets of a SysAdmin: A useful set of guidelines that all system administrators should keep in mind. Created on: 2016-08-01 | | |
| Windows app without Visual Studio: How to make a .NET Windows app without using Visual Studio. Created on: 2015-12-22 | | |
| Deep analysis of a modern web site: A look at how a modern web site is constructed. Created on: 2015-11-28 | | |
| Database administration tutorial for non-DBAs: How to take care of a database as an IT professional. Created on: 2014-11-07 | | |
| A quick guide to LaTeX: Some notes on how to create documents using the LaTeX language. Created on: 2014-10-28 | | |
| Abstraction of code: A look at code abstraction in a typical Perl framework. Created on: 2014-10-15 | | |
| ODBC URI scheme: An RFC describing an URI scheme for ODBC connections. Created on: 2014-10-08 | | |
| Generating Global IDs: A strategy for creating global identifiers in code. Created on: 2014-09-08 | | |
| Survival Quick Guide: A common sense guide to surviving in any emergency situation. Created on: 2013-08-21 | | |
| The story of TideArt: The story of building an art web site to 70,000 page views per month. Created on: 2013-02-17 | | |
| Star Wars Timeline: A visual timeline of the history of the Star Wars universe. Created on: 2012-09-21 | | |
| Making of Agony: A tutorial of how to combine Poser 7 and Vue 6 to create a 3D render. Created on: 2011-01-12 | | |
| Vue Lighting Tutorial: How to achieve realistic lighting in Vue 6. Created on: 2010-07-06 | | |